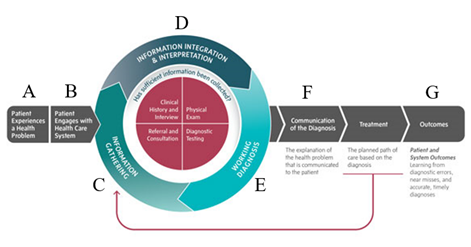

Consider the following scenario in which artificial intelligence (AI) is embedded into each step of the diagnostic process (Figure 1).

(A) A 30-year-old man is on a walk with his dog when his smartwatch alerts him that he might be experiencing atrial fibrillation. Concerned, the man pulls out his phone and asks an AI chatbot for guidance. The chatbot advises him to monitor his symptoms closely and contact a cardiologist.

(B) The man sends a message to his doctor through the patient portal, and the physician replies with an AI-generated response containing next steps and books an appointment.

(C) Before the visit, the doctor reviews an AI-generated clinical summary of the patient’s medical history, flagging a prior smoking habit.

(D) During the consultation, an ambient digital scribe records the conversation, allowing the doctor to focus on clinical decision making. Based on the AI-compiled information, the physician recommends a CT scan.

(E) An AI algorithm identifies that the patient’s scan contains left atrial scarring. The physician consults a specially trained large language model (LLM) to explore how such a result would impact potential treatment pathways: ablation versus antiarrhythmic drug therapy.

(F) After the visit, an AI-generated discharge summary is provided, advising the patient on potential lifestyle modifications.

(G) Days later, an AI-driven system calls to check in on the patient’s progress and gather feedback on their experience.

Figure 1: NASEM diagnostic process map

At first glance, this scenario appears futuristic, like a science-fiction depiction of things to come, but it in fact captures the current capabilities of the AI-embedded diagnostic process. Although no single institution may use, and no single patient may experience, every AI-enabled technology described in the scenario, similar technologies are commercially available and many are already in broad use. AI-enabled clinical decision support tools, such as early sepsis prediction algorithms and various radiology-assistance tools, are commonplace in hospital systems nationwide, and many more AI-enabled technologies are expected to gain wider use in the next few years, including ambient digital scribes, AI message drafting tools, and AI care coordination agents. The promise of these technologies to improve diagnostic accuracy, increase efficiency, and reduce burnout is a major driver of the ongoing AI transformation of healthcare.

What remains unclear, however, is whether the integration of these AI technologies will lead to realized benefits. While many of these technologies are touted as timesaving, burden-reducing, or life-improving tools, achieving these benefits requires successful adoption and utilization by users (often clinicians). The rapid integration of AI-enabled technologies is often misaligned with the understanding of their value and risks among patients, clinicians, and hospital leadership.1–4 Transparency, explainability, fairness, accuracy, usefulness, and risk of harm are all factors that influence the adoption of AI-enabled technologies,5 yet these attributes are often unknown or not communicated to providers. Providers have expressed concerns about using AI, including trust in its accuracy, lack of knowledge of how to use it, and increased patient safety risk.6,7

To capitalize on the promises of AI-enabled technologies to improve diagnosis, it is imperative that those who use them, and those who are affected by them, understand how those technologies work and the risks they present. Clinicians who over-trust AI-enabled technologies may reap the most benefits (i.e., save the most time and effort by deferring to AI decisions) but incur the greatest risk of additional patient harm because they are less likely to catch AI mistakes. Clinicians who under-trust AI-enabled technologies, fearing the additional risk, may gain no benefit because they spend additional time and effort reviewing every AI decision. This issue brief seeks to narrow the AI knowledge gap by explaining what AI is, how it works, its potential benefits, its expected risks, and future directions.

The intent of this issue brief is to meet the following goals:

- Hospital leaders will be better prepared to evaluate, implement, monitor, and govern AI technologies to support safe, effective, and equitable care.

- Clinicians will be better equipped to critically assess AI tools, use them effectively in practice, and communicate clearly with patients about their benefits and risks.

- Patients will be empowered to ask informed questions about the use of AI in their care and to actively participate in ensuring its safe and transparent use.